Optimization

The shader described in the first part computes the blur for each pixel by applying the formula:

where is the 2D Gaussian kernel, is the radius, and is the image to process. The definition implies that for each output pixel, pixels are read from the input image.

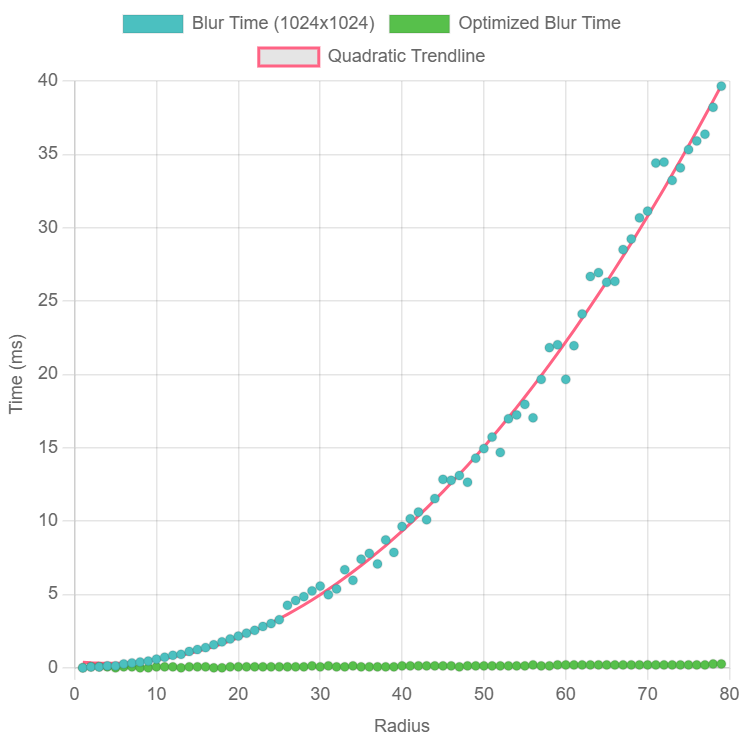

Plotting the execution times as a function of the kernel size, a quadratic trend clearly emerges:

Benchmark performed with NVIDIA GeForce RTX 4080 Laptop GPU

In this article, we will see how to leverage a particular property of the Gaussian kernel to compute more efficiently.

#Separable kernels

A kernel is called separable if it can be expressed as the product of two independent functions:

The Gaussian kernel has this property, indeed:

The independent factors and are both 1D Gaussian kernels.

#Discrete convolution

We define the discrete convolution of with respect to as the operator:

Given a one-dimensional kernel , we define the partial convolution with respect to the x-axis (or horizontal convolution) of with respect to :

Similarly, we define the partial convolution with respect to the y-axis (or vertical convolution) of with respect to :

#Convolution of separable kernels

Computing is equivalent to the discrete convolution of with the Gaussian kernel :

Since the Gaussian kernel is separable, we can rewrite:

The factor is constant with respect to the inner sum, so we can move it outside:

The inner sum represents the pixel of the vertical convolution of with respect to :

Substituting:

The remaining sum is the horizontal convolution of with respect to kernel :

The full convolution is therefore equivalent to:

- Applying a vertical convolution with kernel .

- Subsequently, applying a horizontal convolution with kernel (or vice versa, by swapping the order of the sums).

Since each partial convolution has complexity , the combined computation has complexity per pixel, thus achieving a significant advantage over the direct computation, which has complexity per pixel.

#Implementation

The optimized version of the Gaussian filter is implemented in the Gauss2dBlurOptimized class, which maintains the same

interface as Gauss2dBlur. The main difference lies in the use of two distinct passes to apply the

partial convolutions, leveraging an intermediate working texture.

#Working texture

To handle the two partial convolutions, the class uses an intermediate working texture, represented by the private

property workingTexture. The texture is dynamically allocated and managed based on the input texture size,

as shown in the following code:

export class Gauss2dBlurOptimized { /* ...Same as Gauss2dBlur... */ private workingTexture: GPUTexture | undefined;

private ensureTextures( inputTexture: GPUTexture ) { if (this.needsRecreation(inputTexture)) { this.destroyTextures();

console.log("Creating textures..."); this.workingTexture = this.device.createTexture({ size: { width: inputTexture.width, height: inputTexture.height }, format: inputTexture.format, usage: GPUTextureUsage.RENDER_ATTACHMENT | GPUTextureUsage.TEXTURE_BINDING }); } }

private needsRecreation( inputTexture: GPUTexture ) { return !this.workingTexture || this.workingTexture.width < inputTexture.width || this.workingTexture.height < inputTexture.height; }

destroyTextures() { console.log("Destroying textures..."); this.workingTexture?.destroy(); }

destroy() { this.destroyTextures(); this.uniformBuffer?.destroy(); }}The ensureTextures() method checks whether the existing working texture is compatible with the input texture. If

not, it deallocates the previous one and creates a new one. The input texture format cannot change without recreating

the shader, so it is not considered in needsRecreation().

The working texture is destroyed with the destroy() method along with the other allocated resources, or via destroyTextures()

which can be used to free memory without invalidating the class.

#Blur operation

Unlike the basic version, which directly computes the 2D convolution in a single pass, this implementation separates the two convolutions along the axes, using the working texture for the intermediate pass. The following code illustrates the process:

export class Gauss2dBlurOptimized { static async create( device: GPUDevice, inputFormat: GPUTextureFormat, timer?: GPUTimer ) { /* ...Same as Gauss2dBlur... */

// Create uniform buffer for kernel size and blur direction const uniformBuffer = device.createBuffer({ size: 8, usage: GPUBufferUsage.UNIFORM | GPUBufferUsage.COPY_DST });

/* ...Same as Gauss2dBlur... */ }

async blur( inputTexture: GPUTexture, kernelRadius: number, outputTexture?: GPUTexture ): Promise<GPUTexture> { /* ...Same as Gauss2dBlur... */

// Ensure that working texture is usable this.ensureTextures(inputTexture);

// Perform two 1D pass blur this.gaussBlur1dPass( inputTexture, this.workingTexture!, kernelRadius, 0 ); this.gaussBlur1dPass( this.workingTexture!, outputTexture, kernelRadius, 1 );

return outputTexture; }

private gaussBlur1dPass( inputTex: GPUTexture, outTex: GPUTexture, kernelRadius: number, blurDirection: 0 | 1 ) { const commandEncoder = this.device.createCommandEncoder();

// Update the uniform buffer this.device.queue.writeBuffer( this.uniformBuffer!, 0, new Int32Array([ kernelRadius, blurDirection ]) );

/* ...Same as Gauss2dBlur... */ }}In the first pass, the horizontal convolution is computed (with blurDirection = 0), and the result is saved

in the working texture. In the second pass, the vertical convolution is performed (with blurDirection = 1),

producing the final image in the output texture. Additionally, the uniform buffer, besides the kernel radius, now

also includes the blur direction, which can be horizontal or vertical.

#Shader

In the fragment shader, depending on the convolution direction, either the blurW() or blurH() function is called to

compute the partial convolution. These functions generate the Gaussian blur kernel and apply the convolution

in the appropriate direction:

function gauss2dBlurShader(format: GPUTextureFormat): string { const formatInfo = TEXTURE_FORMAT_INFO[format]!;

// language=WGSL return ` ```wgsl /*... no changes ...*/

struct Params { kernelRadius: i32,

// 0 = W, 1 = H direction: i32 }

/*... no changes ...*/

fn blurW(coords: vec2i, stdDev: f32) -> ${formatInfo.texelType} { let norm = 1.0 / sqrt(2.0 * PI * stdDev * stdDev);

// Gaussian blur kernel generation var blur = ${castToFloat(formatInfo.texelType)}(0);

// Since we are discretizing the Gaussian kernel // the sum of the samples won't add up perfectly to 1 var weightSum = 0.0f;

for (var i = -params.kernelRadius; i <= params.kernelRadius; i++) { let weight = exp(-(f32(i * i) / (2.0 * stdDev * stdDev))); let I = textureLoad(inputTexture, coords + vec2i(i, 0), 0).${channelMask(formatInfo.channelsCount)}; let gij = norm * weight;

blur += ${castToFloat(formatInfo.texelType)}(I) * gij; weightSum += gij; }

// Normalize the result by dividing by the sum of the weights blur /= weightSum; ${formatInfo.channelsCount === 4 ? "blur.a = 1.0f;" : "" }

return ${formatInfo.texelType}(blur); }

fn blurH(coords: vec2i, stdDev: f32) -> ${formatInfo.texelType} { /*...similar to blurW ...*/ }

@fragment fn fs(@builtin(position) coord: vec4f) -> @location(0) ${formatInfo.texelType} { // 99% of Gaussian values fall within 3 * stdDev // P(mu - 3s <= X <= mu + 3s) = 0.9973 let stdDev = f32(params.kernelRadius) / 3.0;

// Gaussian blur kernel generation let pixelCoords = vec2i(coord.xy - 0.5); var blur: ${formatInfo.texelType}; if (params.direction == 0) { blur = blurW(pixelCoords, stdDev); } else { blur = blurH(pixelCoords, stdDev); }

return blur; } ``` `;}#Benchmarking

WebGPU offers a feature called timestamp queries to measure execution times on the GPU with precision.

To use them, it is necessary to enable the timestamp-query feature when requesting the device:

const device = await adapter.requestDevice({ requiredFeatures: [ 'timestamp-query' ]});#Query allocation

To allocate a set of timestamp queries, the createQuerySet() method is used:

const querySet = device.createQuerySet({ type: 'timestamp', count: 2});To store the query results, a dedicated buffer must be allocated:

const resolveBuffer = device.createBuffer({ size: querySet.count * 8, usage: GPUBufferUsage.QUERY_RESOLVE | GPUBufferUsage.COPY_SRC});The buffer must support QUERY_RESOLVE usage, and each timestamp occupies 8 bytes (equivalent to a BigInt in JavaScript).

To read the timestamps on the CPU, it will be necessary to transfer resolveBuffer into a mappable buffer:

const stagingBuffer = device.createBuffer({ size: resolveBuffer.size, usage: GPUBufferUsage.COPY_DST | GPUBufferUsage.MAP_READ});#Query configuration

Each render pass can record up to two timestamps (start and end), by configuring the timestampWrites parameter at

creation time:

const encoder = device.createCommandEncoder();const renderPass = encoder.beginRenderPass({ timestampWrites: { querySet, beginningOfPassWriteIndex: 0, endOfPassWriteIndex: 1 }});In this example, the start timestamp is saved in querySet[0] while the end timestamp in querySet[1].

#Saving timestamps

After completing the render pass, but before calling finish() on the command encoder, it is necessary to use the

resolveQuerySet() method to write the results to the destination buffer:

// ...draw stuff...renderPass.end();encoder.resolveQuerySet( // queries querySet,

// first query 0,

// query count querySet.count,

// destination resolveBuffer,

// destination offset 0);#Reading results

To access the data on the CPU, the resolveBuffer must be copied to the staging buffer:

encoder.copyBufferToBuffer( resolveBuffer,

// source offset 0,

stagingBuffer,

// destination offset 0,

stagingBuffer.size);

device.queue.submit([ commandEncoder.finish()]);At this point, stagingBuffer is ready to be mapped on the CPU:

try { await stagingBuffer.mapAsync(GPUMapMode.READ); const times = new BigInt64Array( this.stagingBuffer.getMappedRange() ); const elapsedMs = Number(times[1] - times[0]) * 1e-6;} finally { stagingBuffer.unmap();}Timestamps are expressed in nanoseconds and queries can be reused, but it is essential to release the resources when they are no longer needed:

resolveBuffer.destroy();stagingBuffer.destroy();querySet.destroy();#The GPUTimer utility

The GPUTimer utility class simplifies the use of timestamp queries, and can be used as follows:

- Create a timer object from the device:

const timer = new GPUTimer(device);- Add the timer to the render pass:

const commandEncoder = device.createCommandEncoder();const renderPass = commandEncoder.beginRenderPass({ ...passDescriptor, // Adds timestamp measurements ...timer.renderPass});

// ...draw stuff...- Resolve the query set before submit:

// ...draw stuff...renderPass.end();

timer.resolveQuerySet(commandEncoder);device.queue.submit([commandEncoder.finish()]);- Read the duration after submit:

// Later, read the timing:const duration = await timer.read();console.log(`Operation took ${timer.fmtTime(duration)}`);- Release the timer when no longer needed:

timer.destroy();#Final results

With the new implementation, the Gaussian filter is applied in two separate passes, thus optimizing the process:

Benchmark performed with NVIDIA GeForce RTX 4080 Laptop GPU

As can be observed, the new version offers a clear advantage in terms of performance, with a linear trend of the execution time with respect to the kernel size.

#Conclusions and next steps

Gaussian convolution, while being computationally intensive in its direct form, can be optimized through kernel separability, reducing the computational complexity from to .

The effectiveness of the optimization was confirmed through precise measurements of execution times, using the timestamp queries provided by WebGPU.

In the third and final part, we will certify correctness and robustness of the implementation through the creation of a dedicated unit test suite.

Stay tuned! 🚀