Basic implementation

Gaussian blur is a filter that applies a weighted average to the neighboring pixels of an image or texture, using a Gaussian function to determine the weights.

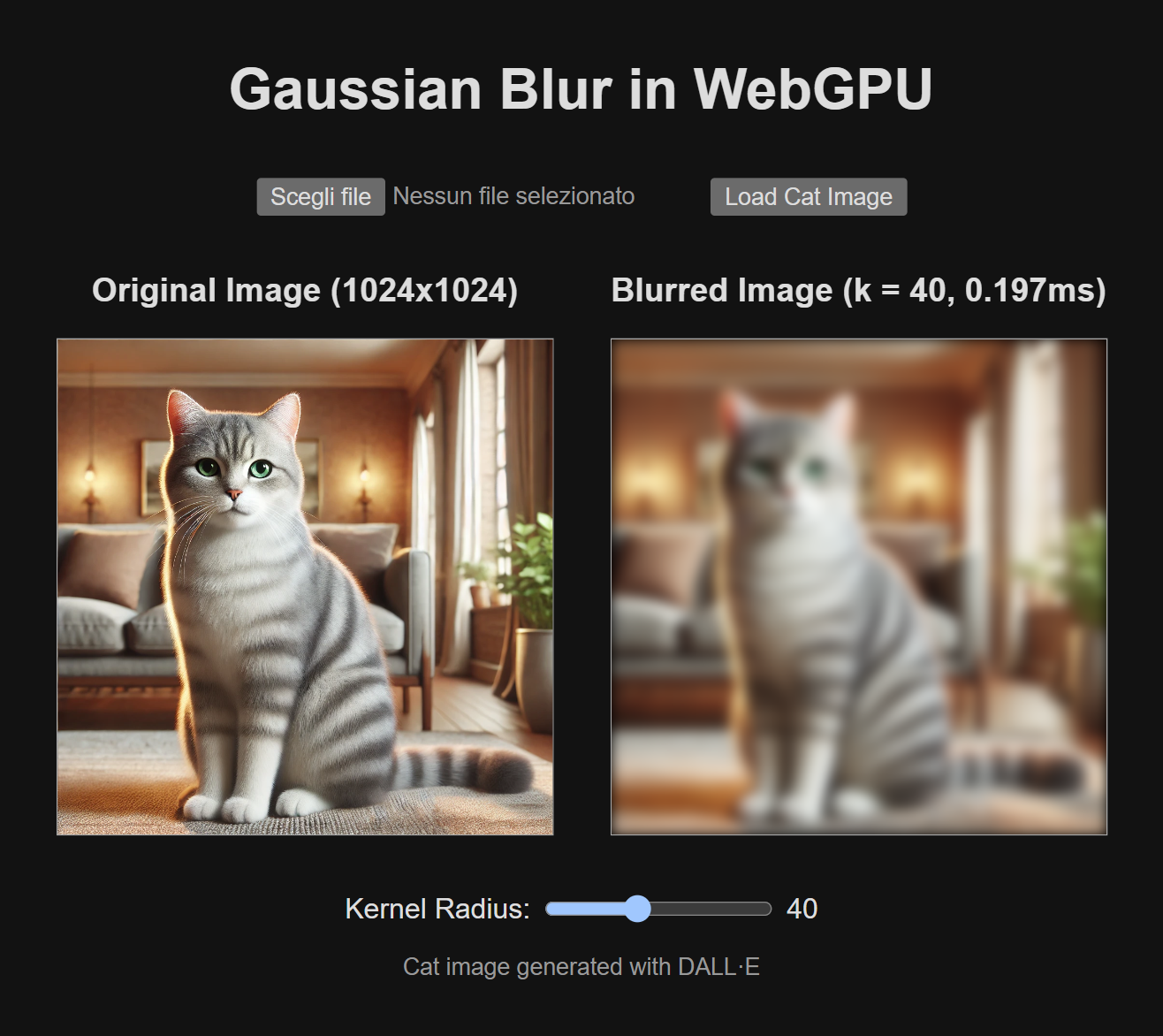

On the right, the image after applying Gaussian blur. Image generated with DALL·E.

The algorithm is based on a matrix called the Gaussian kernel, which represents a normal distribution and is applied to each pixel of the image. The 2D Gaussian kernel has the following form:

$$G(x, y) = \frac{1}{2\pi\sigma^2} \, e^{-\frac{x^2 + y^2}{2\sigma^2}}$$

where:

- $x$ and $y$ are the pixel coordinates relative to the center pixel.

- $\sigma$ is the standard deviation of the Gaussian distribution, which determines the blur intensity. The larger $\sigma$ is, the more blurred the image will be.

- The exponential function computes the weight for each pixel based on its distance from the center.
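The minimal TypeScript sketch below makes the formula concrete by evaluating G(x, y) directly. gaussian2d is a hypothetical helper and is not part of the repository.

```typescript
// Hypothetical helper: evaluates G(x, y) = exp(-(x² + y²) / (2σ²)) / (2πσ²).
function gaussian2d(x: number, y: number, sigma: number): number {
  const twoSigmaSq = 2 * sigma * sigma;
  return Math.exp(-(x * x + y * y) / twoSigmaSq) / (Math.PI * twoSigmaSq);
}

// The weight decreases with the distance from the center pixel.
console.log(gaussian2d(0, 0, 1).toFixed(4)); // 0.1592
console.log(gaussian2d(1, 0, 1).toFixed(4)); // 0.0965
console.log(gaussian2d(1, 1, 1).toFixed(4)); // 0.0585
```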

A demo of this filter is available on the dedicated page.

# Project structure

The repository is an application built with Vite in TypeScript, along with tests written using QUnit and Jest.

The HTML page provides an interface for choosing an image and applying the Gaussian blur in real time while varying the kernel radius.

The implementation goals are the following:

- Support for different texture formats (RGB, RGBA, integer, float, and so on).

- Efficient management of GPU resources.

- Correctness of the result and execution speed.

# Shader management

Instead of writing shaders in separate text files and loading them later in JavaScript, I use functions that return the code as interpolated strings:

```typescript
function gauss2dBlurShader(format: GPUTextureFormat): string {
  // language=WGSL
  return `
    .... shader code ....
  `;
}
```

This approach lets me take full advantage of JavaScript's tools to generate the code dynamically and to centralize the parts shared between different shaders. The idea is reminiscent of CSS-in-JS solutions, offering similar advantages in terms of modularity and reusability.
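The sketch below illustrates how shared parts can be centralized. A hypothetical fullscreenQuadVertexShader() returns a WGSL vertex stage, and other generators interpolate it into their own code. Both function names are assumptions made for this example, not the repository's API. The vertex stage mirrors the one the blur shader uses later in the article.

```typescript
type SampleType = 'f32' | 'u32' | 'i32';

// Hypothetical shared snippet: a vertex stage drawing a fullscreen quad as
// two triangles, which any shader generator can interpolate.
function fullscreenQuadVertexShader(): string {
  // language=WGSL
  return `
struct VertexOutput { @builtin(position) position: vec4f }

@vertex
fn vs(@builtin(vertex_index) index: u32) -> VertexOutput {
  let pos = array<vec2f, 6>(
    vec2f(-1, 1), vec2f(1, 1), vec2f(1, -1),
    vec2f(1, -1), vec2f(-1, -1), vec2f(-1, 1)
  );
  var output: VertexOutput;
  output.position = vec4f(pos[index], 0.0, 1.0);
  return output;
}`;
}

// Hypothetical copy shader: reuses the shared vertex stage and only
// specializes the texture type through the sample type.
function copyShader(sampleType: SampleType): string {
  // language=WGSL
  return `
${fullscreenQuadVertexShader()}

@group(0) @binding(0) var inputTexture: texture_2d<${sampleType}>;

@fragment
fn fs(@builtin(position) coord: vec4f) -> @location(0) vec4<${sampleType}> {
  return textureLoad(inputTexture, vec2i(coord.xy), 0);
}`;
}
```

Any change to the quad then only has to be made once, in fullscreenQuadVertexShader().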

# Multiple texture format support

The different texture formats, both as input and as render target, introduce variations in the shader code. A first example concerns the texture declaration itself, which depends on the sample type (ST):

```wgsl
@group(0) @binding(0) var floatTexture: texture_2d<f32>;
@group(0) @binding(1) var uintTexture: texture_2d<u32>;
@group(0) @binding(2) var sintTexture: texture_2d<i32>;
```

Another example is the return type of the fragment shader, which varies depending on the render target format:

```wgsl
@fragment fn toFloat4() -> @location(0) vec4f
@fragment fn toFloat3() -> @location(0) vec3f
@fragment fn toFloat() -> @location(0) f32
// ... other variants for signed and unsigned sample types ...
```

Finally, the way a texture is read depends on the access type, with or without a sampler:

```wgsl
textureSample(inputTexture, sampler, floatCoords)
textureLoad(inputTexture, integerCoords, 0)
```

In particular, integer textures do not support textureSample(), so they can only be read with textureLoad().
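The access method follows from the sample type, so a generator can pick it automatically. Here is a minimal sketch using a hypothetical readTexel() helper that receives the names of the WGSL variables to use:

```typescript
type SampleType = 'f32' | 'u32' | 'i32';

// Hypothetical helper: returns the WGSL expression that reads one texel.
// textureSample() only accepts float textures, so integer sample types
// fall back to textureLoad() with integer coordinates at mip level 0.
function readTexel(
  sampleType: SampleType,
  textureName: string,
  samplerName: string,
  floatCoords: string,
  integerCoords: string,
): string {
  return sampleType === 'f32'
    ? `textureSample(${textureName}, ${samplerName}, ${floatCoords})`
    : `textureLoad(${textureName}, ${integerCoords}, 0)`;
}

console.log(readTexel('u32', 'inputTexture', 'inputSampler', 'uv', 'pixelCoords'));
// textureLoad(inputTexture, pixelCoords, 0)
```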

The choice between a compute shader and a fragment shader may also depend on the format of the texture to process. A compute shader cannot render to a target, so it has to write its output through a texture_storage_2d binding (a plain texture_2d is read-only), and storage textures are only compatible with a specific subset of formats.
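One way to make this choice explicit is to look up which formats can be bound as texture_storage_2d. The set below is my reading of the storage-capable formats in core WebGPU. Treat it as an assumption and check the texture format capabilities table in the specification, because optional features extend it (bgra8unorm-storage, for example). canWriteFromCompute() is a hypothetical name.

```typescript
// Formats usable as texture_storage_2d in core WebGPU, without optional
// features (assumption: verify against the spec's format capabilities table).
const CORE_STORAGE_FORMATS: ReadonlySet<string> = new Set([
  'rgba8unorm', 'rgba8snorm', 'rgba8uint', 'rgba8sint',
  'rgba16uint', 'rgba16sint', 'rgba16float',
  'r32uint', 'r32sint', 'r32float',
  'rg32uint', 'rg32sint', 'rg32float',
  'rgba32uint', 'rgba32sint', 'rgba32float',
]);

// Hypothetical check: can a compute shader write its output in this format?
// bgra8unorm is storage-capable only with the 'bgra8unorm-storage' feature.
function canWriteFromCompute(format: string, bgra8unormStorage = false): boolean {
  if (CORE_STORAGE_FORMATS.has(format)) return true;
  return format === 'bgra8unorm' && bgra8unormStorage;
}
```

Under this assumption, a compute shader could write r32float, rgba32float, rgba8uint, and rgba8unorm from the map below. It could not write r8uint, and it could write bgra8unorm only when the feature is enabled.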

To address these issues, it is useful to define a map that collects the specific information for each format. This information can be used to dynamically generate the shader sections that depend on the format.

```typescript
export type SampleType = 'f32' | 'u32' | 'i32';

export type TexelType =
  | 'f32' | 'u32' | 'i32'
  | 'vec2f' | 'vec2u' | 'vec2i'
  | 'vec3f' | 'vec3u' | 'vec3i'
  | 'vec4f' | 'vec4u' | 'vec4i';

export type TypedArray =
  | Float32Array | Float64Array
  | Int8Array | Uint8Array | Uint8ClampedArray
  | Int16Array | Uint16Array | Int32Array | Uint32Array;

export type TypedArrayConstructor =
  | Float32ArrayConstructor | Float64ArrayConstructor
  | Int8ArrayConstructor | Uint8ArrayConstructor | Uint8ClampedArrayConstructor
  | Int16ArrayConstructor | Uint16ArrayConstructor | Int32ArrayConstructor | Uint32ArrayConstructor;

export interface TextureFormatInfo {
  bytesPerTexel: number;
  channelsCount: 1 | 2 | 3 | 4;
  sampleType: SampleType;
  texelType: TexelType;
  textureSamplerType: GPUTextureSampleType;
  typedArrayConstructor: TypedArrayConstructor;
}

export const TEXTURE_FORMAT_INFO: { [texelFormat in GPUTextureFormat]?: TextureFormatInfo } = {
  // 32-bit float formats can only be bound as 'unfilterable-float'
  // unless the optional 'float32-filterable' feature is enabled
  "r32float": {
    bytesPerTexel: 4, channelsCount: 1, sampleType: 'f32', texelType: 'f32',
    textureSamplerType: 'unfilterable-float', typedArrayConstructor: Float32Array,
  },
  "rgba32float": {
    bytesPerTexel: 16, channelsCount: 4, sampleType: 'f32', texelType: 'vec4f',
    textureSamplerType: 'unfilterable-float', typedArrayConstructor: Float32Array,
  },
  "rgba8uint": {
    bytesPerTexel: 4, channelsCount: 4, sampleType: 'u32', texelType: 'vec4u',
    textureSamplerType: 'uint', typedArrayConstructor: Uint8ClampedArray,
  },
  "r8uint": {
    bytesPerTexel: 1, channelsCount: 1, sampleType: 'u32', texelType: 'u32',
    textureSamplerType: 'uint', typedArrayConstructor: Uint8Array,
  },
  "rgba8unorm": {
    bytesPerTexel: 4, channelsCount: 4, sampleType: 'f32', texelType: 'vec4f',
    textureSamplerType: 'float', typedArrayConstructor: Uint8ClampedArray,
  },
  "bgra8unorm": {
    bytesPerTexel: 4, channelsCount: 4, sampleType: 'f32', texelType: 'vec4f',
    textureSamplerType: 'float', typedArrayConstructor: Uint8ClampedArray,
  },
};

// Floating-point counterpart of a texel type, with the same number of components
export function castToFloat(texelType: TexelType): TexelType {
  switch (texelType) {
    case "f32": case "u32": case "i32": return "f32";
    case "vec2f": case "vec2u": case "vec2i": return "vec2f";
    case "vec3f": case "vec3u": case "vec3i": return "vec3f";
    case "vec4f": case "vec4u": case "vec4i": return "vec4f";
  }
}

// Swizzle that keeps only the channels present in the format
export function channelMask(channelCount: 1 | 2 | 3 | 4): string {
  switch (channelCount) {
    case 1: return "r";
    case 2: return "rg";
    case 3: return "rgb";
    case 4: return "rgba";
  }
}
```

The TEXTURE_FORMAT_INFO map associates each texture format with a TextureFormatInfo object, which contains a set of useful information:

- bytesPerTexel: the number of bytes per texel.

- channelsCount: the number of channels per texel (e.g., 1 for r32float, 4 for rgba8unorm).

- sampleType: the sample type of a single channel (used to type texture_2d<>).

- texelType: the WGSL type of a whole texel (e.g., vec4f for the RGBA float formats).

- textureSamplerType: the texture sample type used in binding definitions.

- typedArrayConstructor: the constructor of a typed array suitable for the texture format.
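Here is an example of how bytesPerTexel and typedArrayConstructor can work together. The sketch computes the layout of a staging buffer for reading a texture back with copyTextureToBuffer(), which requires bytesPerRow to be a multiple of 256. readbackLayout() and FormatLayout are hypothetical names, and the format info is reduced to the two fields involved.

```typescript
type TypedArrayConstructor =
  | Float32ArrayConstructor
  | Uint8ArrayConstructor
  | Uint8ClampedArrayConstructor;

// Only the two TextureFormatInfo fields needed here (hypothetical subset).
interface FormatLayout {
  bytesPerTexel: number;
  typedArrayConstructor: TypedArrayConstructor;
}

// copyTextureToBuffer() requires bytesPerRow to be a multiple of 256.
const COPY_BYTES_PER_ROW_ALIGNMENT = 256;

// Hypothetical helper: staging buffer layout for reading back a texture.
function readbackLayout(width: number, height: number, info: FormatLayout) {
  const unpaddedBytesPerRow = width * info.bytesPerTexel;
  const bytesPerRow =
    Math.ceil(unpaddedBytesPerRow / COPY_BYTES_PER_ROW_ALIGNMENT) * COPY_BYTES_PER_ROW_ALIGNMENT;
  return {
    bytesPerRow,
    bufferSize: bytesPerRow * height,
    // Row stride in elements of the format's typed array.
    elementsPerRow: bytesPerRow / info.typedArrayConstructor.BYTES_PER_ELEMENT,
  };
}

console.log(readbackLayout(100, 10, { bytesPerTexel: 16, typedArrayConstructor: Float32Array }));
// { bytesPerRow: 1792, bufferSize: 17920, elementsPerRow: 448 }
```

The elementsPerRow value is the stride to use when indexing the mapped data through the format's typed array, because padded rows are longer than width × channels.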

The castToFloat() and channelMask() functions handle specific format-related details and will be covered later.

# Resource management and recycling

To invoke a shader, it is necessary to create and connect several objects, such as buffers, bind groups, and render pipelines. Additionally, Gaussian blur cannot be computed in-place, i.e., by directly overwriting the input texture, but requires creating a separate texture for the output. If the effect needs to be applied repeatedly, for example when the user changes the kernel radius from the slider, it is useful to reuse existing objects rather than recreating them every time.

Another important aspect concerns the memory occupied by buffers and textures, which must be released explicitly by calling the destroy() method on GPUBuffer and GPUTexture objects.

To manage these aspects efficiently, the Gaussian blur implementation is organized into a Gauss2dBlur class rather than a simple function. Resources that can be reused between invocations are kept as class members:

```typescript
export class Gauss2dBlur {
  private readonly device: GPUDevice;
  private readonly uniformBuffer: GPUBuffer;
  private readonly renderPipeline: GPURenderPipeline;
}
```

The class is created through a static, asynchronous factory method, create(), which takes as input all the parameters that cannot change without recreating the reusable resources:

```typescript
export class Gauss2dBlur {
  static async create(device: GPUDevice, inputFormat: GPUTextureFormat): Promise<Gauss2dBlur> {
    // Create the shader module, capturing validation errors explicitly
    device.pushErrorScope('validation');
    const shaderModule = device.createShaderModule({ code: gauss2dBlurShader(inputFormat) });
    const error = await device.popErrorScope();
    if (error) {
      throw new Error(`Could not compile shader: ${error.message}`);
    }

    // Allocate resources
    const inputInfo = TEXTURE_FORMAT_INFO[inputFormat]!;
    let uniformBuffer: GPUBuffer;         // created in the omitted code below
    let renderPipeline: GPURenderPipeline; // created in the omitted code below

    /* ...code... */

    // Instance
    return new Gauss2dBlur(device, uniformBuffer, renderPipeline);
  }

  private constructor(device: GPUDevice, uniformBuffer: GPUBuffer, renderPipeline: GPURenderPipeline) {
    // ...set members...
  }
}
```

The shader is dynamically generated based on the input texture format through the gauss2dBlurShader() function.

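createShaderModule() does not throw exceptions on errors. WebGPU reports validation errors asynchronously, so the call is wrapped in an error scope and the result of popErrorScope() is checked manually.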

Next, the method creates the uniformBuffer for passing parameters to the shader and the renderPipeline. It then instantiates the class through the private constructor, passing the newly created resources.
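The push/pop pattern in create() can be factored into a reusable helper. In the hypothetical sketch below, withValidation() types the device structurally, using only the two methods it calls (GPUDevice provides both). This means a unit test can exercise it with a fake device.

```typescript
// Structural subset of GPUDevice: only the two methods used below.
interface ErrorScopeTarget {
  pushErrorScope(filter: 'validation' | 'out-of-memory' | 'internal'): void;
  popErrorScope(): Promise<{ message: string } | null>;
}

// Hypothetical helper: runs `create` inside a validation error scope and
// throws if WebGPU reported a validation error while it ran.
async function withValidation<T>(
  device: ErrorScopeTarget,
  label: string,
  create: () => T,
): Promise<T> {
  device.pushErrorScope('validation');
  let result: T;
  try {
    result = create();
  } catch (e) {
    // Pop the scope anyway, so the error scope stack stays balanced.
    await device.popErrorScope();
    throw e;
  }
  const error = await device.popErrorScope();
  if (error) {
    throw new Error(`${label}: ${error.message}`);
  }
  return result;
}
```

Inside create(), the createShaderModule() call would then be passed as the create callback, and the error check would no longer need to be repeated for each resource.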

The effect is applied by an asynchronous blur() method. Its parameters can vary without recreating the resources: the input texture (as long as it has the same format passed during creation), the kernel radius, and an optional output texture:

```typescript
export class Gauss2dBlur {
  async blur(inputTexture: GPUTexture, kernelRadius: number, outputTexture?: GPUTexture): Promise<GPUTexture> {
    if (outputTexture) {
      if (outputTexture.width !== inputTexture.width || outputTexture.height !== inputTexture.height) {
        throw new Error('Output texture size does not match input texture!');
      }
      if (outputTexture.format !== inputTexture.format) {
        throw new Error('Output format does not match input format!');
      }
    } else {
      outputTexture = this.device.createTexture({
        size: { width: inputTexture.width, height: inputTexture.height },
        format: inputTexture.format,
        usage: GPUTextureUsage.RENDER_ATTACHMENT | GPUTextureUsage.COPY_SRC,
      });
    }

    /* ...code... */

    return outputTexture;
  }
}
```

The method starts by validating the input parameters. If an output texture is provided, it must have the same format and dimensions as the input texture. Otherwise, the method creates one internally, and the caller is responsible for destroying it.
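These checks only read width, height, and format, so they can be moved into a pure function and unit-tested without a GPU. Below is a hypothetical sketch, where TextureShape stands in for the GPUTexture properties involved:

```typescript
// Structural stand-in for the GPUTexture properties the checks read.
interface TextureShape {
  width: number;
  height: number;
  format: string;
}

// Hypothetical helper with the same checks as the start of blur().
function assertCompatibleOutput(input: TextureShape, output: TextureShape): void {
  if (output.width !== input.width || output.height !== input.height) {
    throw new Error('Output texture size does not match input texture!');
  }
  if (output.format !== input.format) {
    throw new Error('Output format does not match input format!');
  }
}
```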

Once this phase is completed, the method performs the rendering by drawing two triangles (6 vertices), using the outputTexture as the render target.

The destroy() method can be called externally to release all resources allocated by the Gauss2dBlur class:

```typescript
export class Gauss2dBlur {
  destroy() {
    this.uniformBuffer?.destroy();
  }
}
```

Finally, a utility function is available for immediate use:

```typescript
export async function gauss2dBlur(
  device: GPUDevice,
  inputTexture: GPUTexture,
  kernelRadius: number,
  outputTexture?: GPUTexture
): Promise<GPUTexture> {
  const gauss2d = await Gauss2dBlur.create(device, inputTexture.format);

  try {
    // Await here, so that destroy() in finally runs only after blur() has completed
    return await gauss2d.blur(inputTexture, kernelRadius, outputTexture);
  } finally {
    gauss2d.destroy();
  }
}
```

# Filter implementation

The shader for Gaussian blur uses the texture metadata, computes the blur through a Gaussian kernel, and normalizes the results. The numbers in parentheses refer to the markers in the code below.

```typescript
function gauss2dBlurShader(format: GPUTextureFormat): string {
  const formatInfo = TEXTURE_FORMAT_INFO[format]!; // (1)

  // language=WGSL
  return `
struct VertexOutput {
  @builtin(position) position: vec4f
}

struct Params {
  kernelRadius: i32
}

@group(0) @binding(0) var inputTexture: texture_2d<${formatInfo.sampleType}>; // (2)
@group(0) @binding(1) var<uniform> params: Params;

@vertex
fn vs(@builtin(vertex_index) index: u32) -> VertexOutput {
  // (3) Two triangles covering the whole render target
  let pos = array<vec2f, 6>(
    vec2f(-1, 1), vec2f(1, 1), vec2f(1, -1),
    vec2f(1, -1), vec2f(-1, -1), vec2f(-1, 1)
  );

  var output: VertexOutput;
  output.position = vec4f(pos[index], 0.0, 1.0);
  return output;
}

const PI: f32 = 3.141592;

@fragment
fn fs(@builtin(position) coord: vec4f) -> @location(0) ${formatInfo.texelType} { // (4)
  // (5) 99% of Gaussian values fall within 3 * stdDev
  // P(mu - 3s <= X <= mu + 3s) = 0.9973
  let stdDev = f32(params.kernelRadius) / 3.0;

  // Integer coordinates of the current pixel
  let pixelCoords = vec2i(coord.xy - 0.5);
  // Last valid texel, used to clamp reads at the texture borders
  let maxCoords = vec2i(textureDimensions(inputTexture)) - 1;
  // (6) Constant normalization coefficient of the 2D Gaussian
  let norm = 1.0 / (2.0 * PI * stdDev * stdDev);

  // (7) Floating-point accumulator
  var blur = ${castToFloat(formatInfo.texelType)}(0);

  // Since we are discretizing the Gaussian kernel, the sum of the samples won't add up perfectly to 1
  var weightSum = 0.0f;

  for (var i = -params.kernelRadius; i <= params.kernelRadius; i++) {
    for (var j = -params.kernelRadius; j <= params.kernelRadius; j++) {
      let offset = vec2f(f32(i), f32(j));
      // (9) Weight based on the distance from the kernel center
      let weight = exp(-(dot(offset, offset) / (2.0 * stdDev * stdDev)));
      // Clamp to the texture edge: out-of-bounds textureLoad() results are not well defined
      let samplePos = clamp(pixelCoords + vec2i(i, j), vec2i(0), maxCoords);
      // (8) Keep only the channels the format actually has
      let I = textureLoad(inputTexture, samplePos, 0).${channelMask(formatInfo.channelsCount)};
      let gij = norm * weight;

      blur += ${castToFloat(formatInfo.texelType)}(I) * gij; // (10)
      weightSum += gij; // (11)
    }
  }

  // (12) Normalize the result by dividing by the sum of the weights
  blur /= weightSum;
  ${formatInfo.channelsCount === 4 ? "blur.a = 1.0f; // (13)" : ""}

  return ${formatInfo.texelType}(blur); // (14)
}
`;
}
```

# Input format handling

The function uses the TEXTURE_FORMAT_INFO map (1) to adapt the shader code dynamically to different texture formats. This map provides the texture sample type, which defines the type of inputTexture (2). It also provides the texel type, which determines the return type of the fragment shader (4).

The vertex shader generates a quad covering the entire render target using six vertices (3).
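The sketch below shows what the format-dependent pieces expand to. It generates only the inputTexture declaration and the fragment entry point signature. It relies on a trimmed, hypothetical copy of two TEXTURE_FORMAT_INFO entries instead of importing the repository's module.

```typescript
type SampleType = 'f32' | 'u32' | 'i32';

interface FormatInfo {
  sampleType: SampleType;
  texelType: string;
}

// Trimmed, illustrative copy of two TEXTURE_FORMAT_INFO entries.
const FORMATS: Record<string, FormatInfo | undefined> = {
  r8uint: { sampleType: 'u32', texelType: 'u32' },
  rgba8unorm: { sampleType: 'f32', texelType: 'vec4f' },
};

// Hypothetical helper: emits only the format-dependent WGSL lines.
function formatDependentHeader(format: string): string {
  const info = FORMATS[format];
  if (!info) throw new Error(`Unsupported format: ${format}`);
  return [
    `@group(0) @binding(0) var inputTexture: texture_2d<${info.sampleType}>;`,
    `@fragment fn fs(@builtin(position) coord: vec4f) -> @location(0) ${info.texelType}`,
  ].join('\n');
}

console.log(formatDependentHeader('r8uint'));
// @group(0) @binding(0) var inputTexture: texture_2d<u32>;
// @fragment fn fs(@builtin(position) coord: vec4f) -> @location(0) u32
```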

# Blur computation

The Gaussian blur is computed using a discrete kernel.

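The pixel value at position $(x, y)$ after applying the Gaussian filter is given by the sum of the neighboring pixel values, weighted according to the kernel:

$$I'(x, y) = \sum_{i=-k}^{k} \sum_{j=-k}^{k} I(x + i, y + j) \, G(i, j)$$

where:

- $I(x + i, y + j)$ is the pixel value at position $(x + i, y + j)$ in the original image.

- $G(i, j)$ is the Gaussian kernel value for position $(i, j)$.

- $k$ is half the kernel size, i.e., the kernel radius (e.g., if the kernel is $5 \times 5$, then $k = 2$).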

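The standard deviation is set to one third of the kernel radius, $\sigma = k / 3$ (5). This choice exploits the following statistical property of the normal distribution:

$$P(\mu - 3\sigma \le X \le \mu + 3\sigma) \approx 0.9973$$

In other words, a window extending $3\sigma$ pixels from the center already captures almost all of the kernel's weight.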

This parameter is used to precompute the normalization coefficient $\frac{1}{2\pi\sigma^2}$ (6), which is constant for the whole image.
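These values are easy to check numerically. The sketch below uses hypothetical helper names. It derives σ and the coefficient 1/(2πσ²) from the kernel radius. It then integrates the standard normal density over [-3, 3] with Simpson's rule to confirm the 0.9973 figure.

```typescript
// σ = k / 3 and the constant coefficient 1 / (2πσ²), as computed in the shader.
function kernelParams(kernelRadius: number): { stdDev: number; norm: number } {
  const stdDev = kernelRadius / 3;
  return { stdDev, norm: 1 / (2 * Math.PI * stdDev * stdDev) };
}

// Density of the standard normal distribution (μ = 0, σ = 1).
function normalPdf(x: number): number {
  return Math.exp(-0.5 * x * x) / Math.sqrt(2 * Math.PI);
}

// Composite Simpson's rule on [a, b]; n must be even.
function simpson(f: (x: number) => number, a: number, b: number, n: number): number {
  const h = (b - a) / n;
  let sum = f(a) + f(b);
  for (let i = 1; i < n; i++) {
    sum += (i % 2 === 0 ? 2 : 4) * f(a + i * h);
  }
  return (sum * h) / 3;
}

console.log(kernelParams(6).stdDev); // 2
console.log(simpson(normalPdf, -3, 3, 1000).toFixed(4)); // 0.9973
```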

For the blur computation, all values are treated as floating-point numbers.

The castToFloat() utility provides the floating-point counterpart of the texel type. It is used both to declare the accumulator (7) and to convert the data read from the texture (10). This step is necessary because the input texture could be integer rather than floating-point.

Furthermore, the textureLoad() function always returns a 4-component vector, regardless of the actual number of texture channels. This can cause a compilation error when the input texture (and, consequently, the type of blur) does not have 4 channels.

To avoid this mismatch, the required channels are extracted selectively using the channelMask() function (8). This function selects only the channels corresponding to the input format, adapting the fixed number of components returned by textureLoad() to that of the blur variable.
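Combined, the two helpers turn one generic template line into format-specific WGSL. The sketch below reimplements castToFloat() and channelMask() compactly, with the same mappings as above but different code. It adds a hypothetical sampleExpression() helper, where coords is a placeholder name for the integer sample coordinates.

```typescript
type TexelType =
  | 'f32' | 'u32' | 'i32'
  | 'vec2f' | 'vec2u' | 'vec2i'
  | 'vec3f' | 'vec3u' | 'vec3i'
  | 'vec4f' | 'vec4u' | 'vec4i';

// Same mapping as castToFloat(): scalars become f32, vectors keep their size.
function castToFloat(texelType: TexelType): TexelType {
  return texelType.startsWith('vec') ? (`${texelType.slice(0, 4)}f` as TexelType) : 'f32';
}

// Same mapping as channelMask(): keeps only the first channelCount channels.
function channelMask(channelCount: 1 | 2 | 3 | 4): string {
  return 'rgba'.slice(0, channelCount);
}

// Hypothetical helper: the generated expression that reads and converts one texel.
function sampleExpression(texelType: TexelType, channelCount: 1 | 2 | 3 | 4): string {
  const load = `textureLoad(inputTexture, coords, 0).${channelMask(channelCount)}`;
  return `${castToFloat(texelType)}(${load})`;
}

console.log(sampleExpression('u32', 1));
// f32(textureLoad(inputTexture, coords, 0).r)
console.log(sampleExpression('vec4u', 4));
// vec4f(textureLoad(inputTexture, coords, 0).rgba)
```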

During the iteration over surrounding pixels, the weight of each sample is computed based on the distance from the kernel center (9). The total sum of weights (11) is also tracked, as it is needed to normalize the final result.
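A CPU version of the same loop is handy as a reference when testing the shader's output. The sketch below blurs a single-channel image stored row-major in a Float32Array. It uses σ = k/3 as in the shader and normalizes the weights by their sum. Clamping coordinates at the image border is an assumption of this sketch, and gaussBlurReference is a hypothetical name.

```typescript
// Hypothetical CPU reference: single-channel image stored row-major,
// kernel radius k, σ = k / 3. The constant 1 / (2πσ²) factor is omitted
// because it cancels when dividing by the weight sum.
function gaussBlurReference(
  src: Float32Array,
  width: number,
  height: number,
  k: number,
): Float32Array {
  // With k = 0 the standard deviation would be 0, so just copy the input.
  if (k <= 0) return src.slice();

  const twoSigmaSq = 2 * (k / 3) * (k / 3);
  const dst = new Float32Array(width * height);

  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      let sum = 0;
      let weightSum = 0;
      for (let j = -k; j <= k; j++) {
        for (let i = -k; i <= k; i++) {
          // Clamp to the nearest edge pixel (assumed border policy).
          const sx = Math.min(width - 1, Math.max(0, x + i));
          const sy = Math.min(height - 1, Math.max(0, y + j));
          const weight = Math.exp(-(i * i + j * j) / twoSigmaSq);
          sum += src[sy * width + sx] * weight;
          weightSum += weight;
        }
      }
      dst[y * width + x] = sum / weightSum;
    }
  }
  return dst;
}
```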

# Normalization and output

Since the Gaussian kernel is sampled discretely, the sum of the weights does not exactly equal 1. For this reason, the blur result is normalized by dividing it by the computed weight sum (12). For textures that include an alpha channel, the alpha value is fixed to 1 (13), so the output is fully opaque regardless of the input alpha.

Finally, the resulting floating-point value is converted back to the texel type of the format (14). The returned value must match the return type declared by the fragment shader, otherwise the shader would not compile.
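A short numeric check shows why the division is needed. With σ = k/3, the sampled weights of a narrow kernel add up to noticeably more than 1, while those of a wider one fall slightly short. The helpers below are hypothetical. The second one also shows that the constant 1/(2πσ²) factor can be dropped, since it cancels in the division.

```typescript
// Hypothetical helper: raw sum of the sampled weights norm · exp(...) over a
// (2k + 1) × (2k + 1) window, with σ = k / 3 as in the shader.
function rawWeightSum(k: number): number {
  const sigma = k / 3;
  const norm = 1 / (2 * Math.PI * sigma * sigma);
  let sum = 0;
  for (let j = -k; j <= k; j++) {
    for (let i = -k; i <= k; i++) {
      sum += norm * Math.exp(-(i * i + j * j) / (2 * sigma * sigma));
    }
  }
  return sum;
}

// Hypothetical helper: weights divided by their sum. The constant norm
// factor is left out because it would cancel in the division anyway.
function normalizedWeights(k: number): number[] {
  const sigma = k / 3;
  const weights: number[] = [];
  for (let j = -k; j <= k; j++) {
    for (let i = -k; i <= k; i++) {
      weights.push(Math.exp(-(i * i + j * j) / (2 * sigma * sigma)));
    }
  }
  const total = weights.reduce((a, b) => a + b, 0);
  return weights.map((w) => w / total);
}

console.log(rawWeightSum(1).toFixed(3)); // 1.497
console.log(rawWeightSum(3).toFixed(3)); // 0.999
```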

# Conclusions and next steps

In this article, we saw how to implement the Gaussian filter, supporting different input formats and correctly managing resources. In the next part, we will focus on an important optimization of the convolution computation and on how to accurately measure shader execution times.