Testing and benchmarking

In the first two articles, we developed, optimized, and profiled a Gaussian blur algorithm based on WebGPU. Thanks to a dedicated user interface, it is possible to observe the effect applied in real time. However, a simple visual inspection is not sufficient to answer several questions:

- Does the fragment shader correctly compute the convolution according to the Gaussian kernel?

- Does the optimized two-pass version produce the same result as the standard implementation?

- Does the shader adapt correctly to different texture formats?

A unit test suite can provide clear answers to these questions, while also ensuring the correct functioning of the code over time, in case of future changes. There is, however, a problem: WebGPU is not supported in a Node.js environment, not even with the help of libraries like jsdom, which only partially simulates browser behavior.

#Project structure

QUnit is a testing framework that can run everywhere, including natively in the browser. This characteristic makes it ideal for testing WebGPU-based code, since in the browser we can access the necessary APIs without limitations.

The GitHub project organizes the source code and tests in two separate folders, src/ and test/, following a convention similar to the one commonly used in Java.

    src/
      filter/
        gauss_blur_2d.ts
        gauss_blur_2d_optimized.ts
      utils/
        math.ts
        polynomial-regression.ts
        range.ts
        slice_matrix.ts
      webgpu/
        generate_texture.ts
        read_texture.ts
        texture_metadata.ts
        timer.ts
      async_process_handler.ts
      main.ts
      vite-env.d.ts
    test/
      browser/
        filter/
          gauss_blur_2d.qunit.ts
        utils/
          texture_assert.ts
        webgpu/
          read_texture.qunit.ts
        index.html
        main.qunit.ts
        qunit.css
        vite.config.ts
        vite-env.d.ts
      node/
        utils/
          math.spec.ts
          polynomial-regression.spec.ts
          range.spec.ts
          slice_matrix.spec.ts
        jest.config.js
      resources/
        .gitkeep
        gauss_blur.py
    ...
    index.html
    package.json
    style.css
    tsconfig.json
    vite.config.ts

The test folder is organized into three main subfolders:

- browser/: includes tests that run in the browser using QUnit.

- node/: contains tests that run in a Node.js environment with Jest.

- resources/: collects external data used as resources by the tests.

Test files use a dedicated suffix, such as .qunit.ts and .spec.ts, and follow a structure that mirrors the source folder. For example, the file test/browser/filter/gauss_blur_2d.qunit.ts contains the tests for both src/filter/gauss_blur_2d.ts and src/filter/gauss_blur_2d_optimized.ts, since it is convenient to test these two closely related functionalities together. Similarly, the file src/utils/slice_matrix.ts is tested in test/node/utils/slice_matrix.spec.ts.
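The mirroring convention can be expressed as a small mapping from a source path to its default test path. The helper below, testPathFor, is hypothetical and not part of the project. It only illustrates the naming scheme and does not cover exceptions such as gauss_blur_2d_optimized.ts, which shares a test file with gauss_blur_2d.ts.

```typescript
// Hypothetical helper, for illustration only: maps a source file to the
// test file that the mirroring convention would suggest by default.
type TestRunner = "browser" | "node";

const TEST_SUFFIX: Record<TestRunner, string> = {
  browser: ".qunit.ts", // QUnit tests, executed in the browser
  node: ".spec.ts",     // Jest tests, executed in Node.js
};

function testPathFor(sourcePath: string, runner: TestRunner): string {
  if (!sourcePath.startsWith("src/") || !sourcePath.endsWith(".ts")) {
    throw new Error(`Not a TypeScript source path: ${sourcePath}`);
  }
  // Drop the leading "src/" and the trailing ".ts"
  const relative = sourcePath.slice("src/".length, -".ts".length);
  return `test/${runner}/${relative}${TEST_SUFFIX[runner]}`;
}

console.log(testPathFor("src/utils/slice_matrix.ts", "node"));
// test/node/utils/slice_matrix.spec.ts
console.log(testPathFor("src/webgpu/read_texture.ts", "browser"));
// test/browser/webgpu/read_texture.qunit.ts
```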

For simplicity, the QUnit and Jest configuration files are placed within their respective folders, rather than in the project root. This way, each framework remains self-contained and easily identifiable.

#QUnit setup

To use QUnit, the required dependencies must be installed. With pnpm, for example, the command is as follows:

    pnpm add -D qunit @types/qunit

The test/browser/vite.config.ts file contains the Vite configuration needed to transpile the tests:

    import { defineConfig } from 'vite';
    import path from "path";

    export default defineConfig({
      resolve: {
        alias: {
          '@': path.resolve(__dirname, '../../src'),
          '@test': path.resolve(__dirname, '../'),
          '@resources': path.resolve(__dirname, '../resources')
        }
      },
      root: './test/browser',
      server: {
        port: 4000,
        open: false
      },
      build: {
        outDir: 'dist/tests',
        emptyOutDir: true
      }
    });

The configuration defines an @ alias that points to the source folder, an @resources alias for the resources folder, and a @test alias that points to the test folder.

The default entry point for compilation is the test/browser/index.html file:

    <!DOCTYPE html>
    <html lang="en">
    <head>
      <meta charset="UTF-8">
      <title>QUnit Tests</title>
    </head>
    <body>
      <div id="qunit"></div>
      <div id="qunit-fixture"></div>
      <script type="module" src="./main.qunit.ts"></script>
    </body>
    </html>

The div with id qunit is used by the framework to inject its user interface.

The div with id qunit-fixture, instead, can be used by tests to create DOM elements. However, it is important to keep in mind that this div is always emptied between tests. Therefore, it is not suitable for displaying results, such as the texture obtained by applying Gaussian blur.

The test/browser/main.qunit.ts file includes the QUnit framework and its stylesheet:

    import { test } from "qunit";
    import './qunit.css';

    test("QUnit works", assert => {
      // Expect 0 assertions in this test
      assert.expect(0);
    });

The QUnit stylesheet can be copied from the node_modules/ directory to the browser test folder with the following command:

    cp node_modules/qunit/qunit/qunit.css test/browser

To simplify test execution, the package.json file defines scripts:

    {
      "scripts": {
        "test:node": "jest --config test/node/jest.config.js",
        "test:browser": "vite --config test/browser/vite.config.ts"
      }
    }

QUnit tests are run by executing the command npm run test:browser, then visiting the address http://localhost:4000/ in the browser.

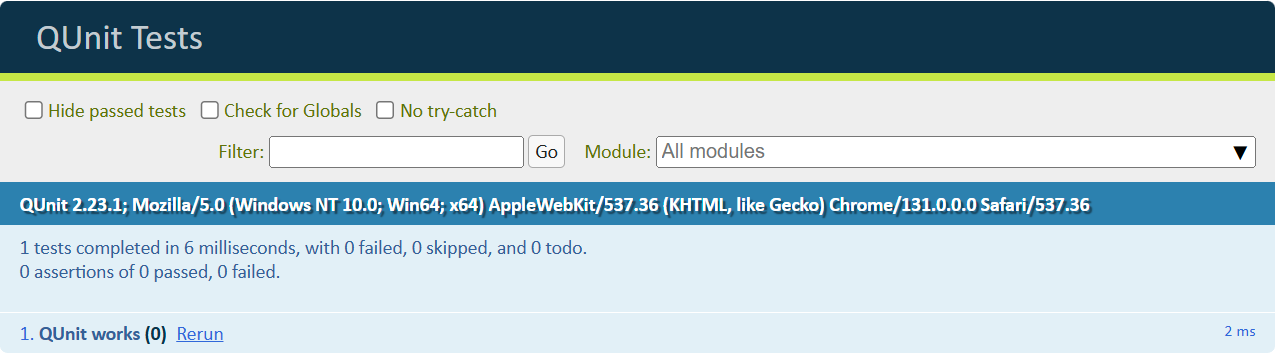

QUnit Web interface

With these steps, the QUnit setup is complete.

#Using QUnit

In QUnit, each test is defined by the test() function:

    import { test } from 'qunit';

    test("QUnit works", assert => {
      assert.expect(0);
    });

The test function accepts two parameters: the test name and a callback function that encapsulates the actual test.

The assert parameter is an object that provides several methods for making assertions. In the example, the expect() method serves to declare the number of assertions expected to be executed during the test.

Related tests can be grouped into modules using the module() function:

    import { module, test } from 'qunit';

    module("Math is not an opinion", hooks => {
      test("Basic algebra", assert => {
        assert.equal(2 + 2, 4, "sum works");
        assert.equal(2 - 3, -1, "difference works");
      });

      test("Trigonometry", assert => {
        assert.equal(Math.cos(Math.PI), -1, "cosine works");
        assert.true(Math.sin(Math.PI / 4) > 0, "sine works");
      });
    });

Tests can use async/await to handle asynchronous operations:

    import { module, test } from 'qunit';

    module("WebGPU", hooks => {
      test("is available", async assert => {
        assert.ok(navigator.gpu, "WebGPU is supported");

        const adapter = await navigator.gpu.requestAdapter();
        assert.ok(adapter, "Able to request GPU adapter");
      });
    });

The hooks object passed to the module provides access to the test lifecycle, allowing code to be executed at specific times before and after test execution:

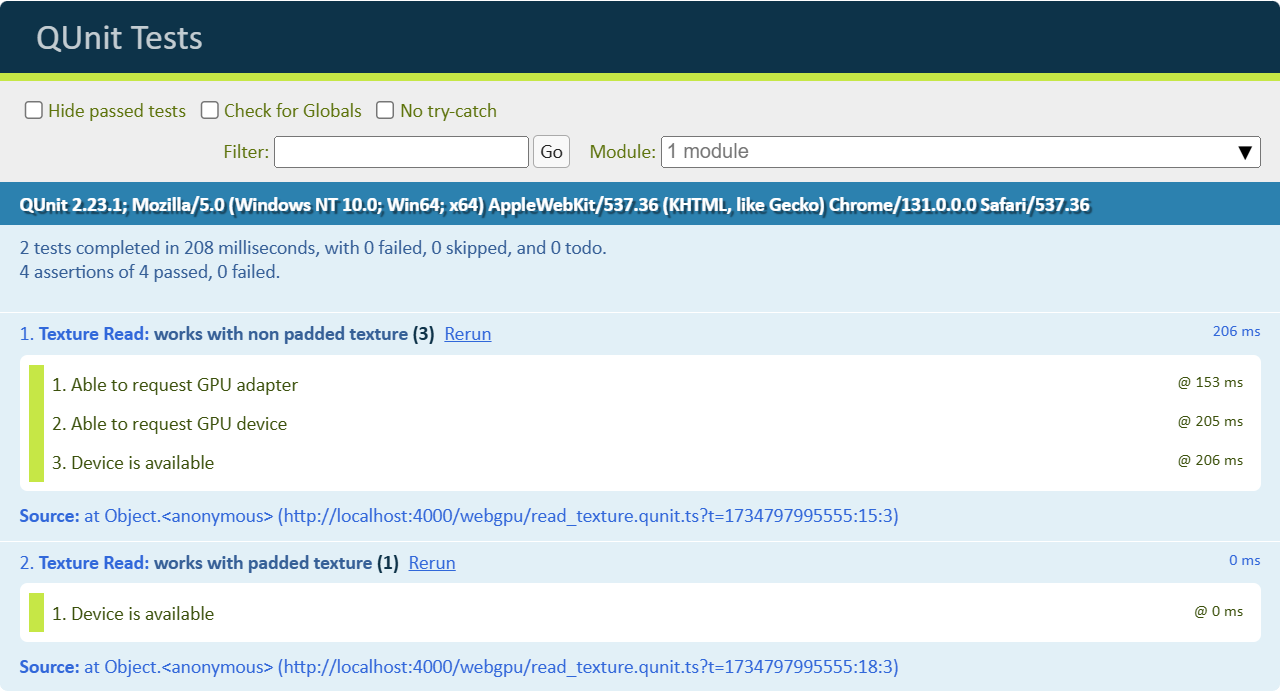

    module("Texture Read", hooks => {
      let adapter: GPUAdapter;
      let device: GPUDevice;

      hooks.before(async (assert) => {
        // Request the GPU adapter
        adapter = (await navigator.gpu.requestAdapter())!;
        assert.ok(adapter, "Able to request GPU adapter");

        // Request the GPU device
        device = await adapter.requestDevice();
        assert.ok(device, "Able to request GPU device");
      });

      test("works with non padded texture", async (assert) => {
        // "device" can be used
      });

      test("works with padded texture", async (assert) => {
        // "device" can be used also here
      });
    });

In addition to before, which runs before all tests, after, beforeEach, and afterEach are also available to handle operations to be executed respectively after all tests, before each test, or after each test.

The assertions contained in before are executed only once.

#Texture utilities

The tests for Gaussian blur have the following objectives:

- Verify the correct functioning of the basic implementation;

- Verify the correct functioning of the optimized implementation;

- Verify the correct functioning with different texture formats.

During the tests, it will be frequently necessary to:

- Create textures to provide as input.

- Transfer the computed textures from the GPU to the CPU.

- Compare the texture contents with expected values.

Since these operations are common and require several steps, it is appropriate to create utility functions that make the tests more readable and easier to maintain.

#Texture generation

The generateTexture() function is dedicated to generating a texture from predefined data:

    import { TEXTURE_FORMAT_INFO, TypedArray } from '@/webgpu/texture_metadata.ts';

    export function generateTexture(
      device: GPUDevice,
      format: GPUTextureFormat,
      width: number,
      height: number,
      data: TypedArray,
      usage: GPUTextureUsageFlags,
      label: string
    ): GPUTexture {
      const { bytesPerTexel } = TEXTURE_FORMAT_INFO[format];
      const bytesPerRow = bytesPerTexel * width;

      // COPY_DST is always added, since writeTexture() copies into the texture
      const texture = device.createTexture({
        size: { width, height },
        format,
        usage: GPUTextureUsage.COPY_DST | usage,
        label
      });

      device.queue.writeTexture(
        { texture },
        data,
        { bytesPerRow, rowsPerImage: height },
        [ width, height ]
      );

      return texture;
    }

This function allocates memory with createTexture() and then transfers the data buffer to the GPU using writeTexture(). The TEXTURE_FORMAT_INFO metadata provides the number of bytes per texel, needed to calculate the total number of bytes per row required by writeTexture().
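The contents of texture_metadata.ts are not listed in this article. As a rough sketch, the metadata could be a lookup table keyed by format name; the names FORMAT_INFO_SKETCH and tightBytesPerRow below are hypothetical, and only two of the formats used later in the tests are included.

```typescript
// Assumed shape of the metadata; the real texture_metadata.ts may differ.
interface TextureFormatInfoSketch {
  bytesPerTexel: number;
  // Constructor used to view raw bytes as typed values of this format
  typedArrayConstructor: new (buffer: ArrayBuffer) => Uint8Array | Float32Array;
}

type SketchFormat = "r8uint" | "r32float";

const FORMAT_INFO_SKETCH: Record<SketchFormat, TextureFormatInfoSketch> = {
  r8uint:   { bytesPerTexel: 1, typedArrayConstructor: Uint8Array },
  r32float: { bytesPerTexel: 4, typedArrayConstructor: Float32Array },
};

// Bytes per row without any padding, as computed in generateTexture()
function tightBytesPerRow(format: SketchFormat, width: number): number {
  return FORMAT_INFO_SKETCH[format].bytesPerTexel * width;
}

console.log(tightBytesPerRow("r32float", 3)); // 12
console.log(tightBytesPerRow("r8uint", 5));   // 5

// The constructor turns a raw buffer into typed texel values
const r32floatInfo = FORMAT_INFO_SKETCH["r32float"];
const texels = new r32floatInfo.typedArrayConstructor(
  new ArrayBuffer(3 * 3 * r32floatInfo.bytesPerTexel)
);
console.log(texels.length); // 9
```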

In tests, it can be used as follows:

    test('Texture', assert => {
      const data = new Float32Array([
        1, 2, 3,
        4, 5, 6,
        7, 8, 9
      ]);

      let inputTexture: GPUTexture | undefined;
      try {
        inputTexture = generateTexture(
          device,
          "r32float",
          3,
          3,
          data,
          GPUTextureUsage.TEXTURE_BINDING | GPUTextureUsage.COPY_SRC,
          "inputTexture"
        );
      } finally {
        inputTexture?.destroy();
      }
    });

In this example, the generateTexture() function is used to create a 3x3 pixel texture from a Float32Array. After using the texture, it is destroyed to release the resources.

#Reading a texture

To transfer the contents of a texture from the GPU to the CPU, the following steps are required:

- Create a staging buffer, configured to be mappable on the CPU.

      const stagingBuffer = device.createBuffer({
        size: bytesPerRow * texture.height,
        usage: GPUBufferUsage.MAP_READ | GPUBufferUsage.COPY_DST
      });

- Copy the texture contents to the staging buffer using the copyTextureToBuffer() method.

      const encoder: GPUCommandEncoder = device.createCommandEncoder();
      encoder.copyTextureToBuffer(
        { texture },
        { buffer: stagingBuffer, bytesPerRow },
        { width: texture.width, height: texture.height, depthOrArrayLayers: 1 }
      );
      const commandBuffer = encoder.finish();
      device.queue.submit([commandBuffer]);

- Map the staging buffer into RAM to access it from the CPU.

      await stagingBuffer.mapAsync(GPUMapMode.READ);
      const mappedRange: ArrayBuffer = stagingBuffer.getMappedRange();

- Create a copy of the necessary data.

      // The slice() method of ArrayBuffer instances returns a new ArrayBuffer
      // whose contents are a copy of this ArrayBuffer's bytes.
      const mappedRangeDeepCopy = mappedRange.slice(0);

      // When called with an ArrayBuffer instance,
      // a new typed array view is created that views the specified buffer.
      return new typedArrayConstructor(mappedRangeDeepCopy);

- Unmap the buffer.

      stagingBuffer.unmap();

- Destroy the staging buffer to release GPU memory.

      stagingBuffer.destroy();

In this case too, it is appropriate to encapsulate the entire process within a reusable utility function.

    import { TEXTURE_FORMAT_INFO, TypedArray } from '@/webgpu/texture_metadata.ts';

    export async function readTextureData(
      device: GPUDevice,
      texture: GPUTexture
    ): Promise<TypedArray> {
      // Declared as possibly undefined, because createBuffer() may throw
      // before the variable is assigned and "finally" still has to check it
      let stagingBuffer: GPUBuffer | undefined;
      try {
        const { bytesPerTexel, typedArrayConstructor } = TEXTURE_FORMAT_INFO[texture.format];
        const bytesPerRow = bytesPerTexel * texture.width;

        // 1) Create a staging buffer, configured to be mappable on the CPU
        stagingBuffer = device.createBuffer({
          label: `stagingBuffer(${texture.format})`,
          size: bytesPerRow * texture.height,
          usage: GPUBufferUsage.MAP_READ | GPUBufferUsage.COPY_DST
        });

        // 2) Copy the texture contents to the staging buffer using
        //    the copyTextureToBuffer() method
        const encoder: GPUCommandEncoder = device.createCommandEncoder(
          { label: `readTextureData(${texture.format})` }
        );
        encoder.copyTextureToBuffer(
          { texture },
          { buffer: stagingBuffer, bytesPerRow },
          { width: texture.width, height: texture.height, depthOrArrayLayers: 1 }
        );
        const commandBuffer = encoder.finish();
        device.queue.submit([commandBuffer]);

        // 3) Map the staging buffer into RAM
        await stagingBuffer.mapAsync(GPUMapMode.READ);
        const mappedRange: ArrayBuffer = stagingBuffer.getMappedRange();

        // 4) Create a copy of the necessary data
        // The slice() method of ArrayBuffer instances returns a new ArrayBuffer
        // whose contents are a copy of this ArrayBuffer's bytes.
        const mappedRangeDeepCopy = mappedRange.slice(0);

        // When called with an ArrayBuffer instance,
        // a new typed array view is created that views the specified buffer.
        return new typedArrayConstructor(mappedRangeDeepCopy);
      } finally {
        if (stagingBuffer) {
          // 5) Unmap the buffer
          stagingBuffer.unmap();

          // 6) Destroy the staging buffer to release GPU memory
          stagingBuffer.destroy();
        }
      }
    }

The readTextureData() function is designed to read data from a GPU texture in a generic and flexible way.

To ensure proper resource management, the entire function logic is enclosed in a try-finally block. This approach ensures that critical operations, such as unmapping (unmap()) and destroying the staging buffer (destroy()), are executed even in case of errors.
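Step 4 copies the mapped range because the ArrayBuffer returned by getMappedRange() is detached once the buffer is unmapped, so a typed array created directly on it could not be used after the function returns. The difference between a view and a copy can be shown without a GPU. In the sketch below a plain ArrayBuffer stands in for the mapped range, and the function name copyVersusView is only illustrative.

```typescript
// A plain ArrayBuffer stands in for the mapped range; no GPU is involved.
function copyVersusView(): { view: number[]; copy: number[] } {
  const mapped = new Float32Array([1, 2, 3, 4]).buffer;

  // A typed array created directly on the buffer is only a view:
  // it shares memory with the buffer.
  const view = new Float32Array(mapped);

  // slice(0) allocates a new ArrayBuffer and copies the bytes into it.
  const copy = new Float32Array(mapped.slice(0));

  // Overwrite the original memory, to mimic the mapping going away.
  new Float32Array(mapped).fill(0);

  return { view: Array.from(view), copy: Array.from(copy) };
}

const result = copyVersusView();
console.log(result.view); // [ 0, 0, 0, 0 ]  the view saw the change
console.log(result.copy); // [ 1, 2, 3, 4 ]  the copy did not
```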

In tests, it can be used as follows:

    test("Create and read textures", async (assert) => {
      const data = new Float32Array(
        range(1, 1 + 64 * 64)
      );

      let inputTexture: GPUTexture | undefined;
      try {
        inputTexture = generateTexture(
          device,
          "r32float",
          64,
          64,
          data,
          GPUTextureUsage.TEXTURE_BINDING | GPUTextureUsage.COPY_SRC,
          "inputTexture"
        );

        const gpuData = await readTextureData(device, inputTexture);
        assert.deepEqual(gpuData, data, "Data is read back");
      } finally {
        inputTexture?.destroy();
      }
    });

#The problem with copyTextureToBuffer()

If you try to read a 5x5 texture:

    test("r32float texture", async (assert) => {
      let inputTexture: GPUTexture | undefined;
      try {
        const data = new Float32Array(
          range(1, 1 + 5 * 5)
        );

        // Assign to the outer variable, so that "finally" can destroy the texture
        inputTexture = generateTexture(
          device,
          "r32float",
          5,
          5,
          data,
          GPUTextureUsage.TEXTURE_BINDING | GPUTextureUsage.COPY_SRC,
          "inputTexture"
        );

        const gpuData = await readTextureData(device, inputTexture);
        assert.deepEqual(gpuData, data, "Data is read back");
      } finally {
        inputTexture?.destroy();
      }
    });

You get the following errors:

    bytesPerRow (20) is not a multiple of 256.
     - While encoding [CommandEncoder "readTextureData(r32float)"].CopyTextureToBuffer([Texture "inputTexture"], [Buffer "stagingBuffer(r32float)"], [Extent3D width:5, height:5, depthOrArrayLayers:1]).
     - While finishing [CommandEncoder "readTextureData(r32float)"].

    [Invalid CommandBuffer from CommandEncoder "readTextureData(r32float)"] is invalid.
     - While calling [Queue].Submit([[Invalid CommandBuffer from CommandEncoder "readTextureData(r32float)"]])

For efficiency reasons, WebGPU requires the number of bytes per row passed to copyTextureToBuffer() to be a multiple of 256. A 5x5 r32float texture has only 5 × 4 = 20 bytes per row, hence the error, while the previous 64x64 example worked because 64 × 4 = 256. To solve this problem, it is possible to create a staging buffer larger than necessary, so as to satisfy the constraint.

The function pad(v, m) computes the smallest multiple of m greater than or equal to v:

    function pad(v: number, m: number) {
      return Math.ceil(v / m) * m;
    }

| bytesPerRow | pad(bytesPerRow, 256) |
|---|---|
| 20 | 256 |
| 256 | 256 |
| 320 | 512 |
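As a worked example, the sizes involved in reading back the 5x5 r32float texture can be computed directly. The stagingLayout helper is hypothetical and exists only for this illustration; pad() is repeated so that the sketch is self-contained.

```typescript
// Same helper as above, repeated so the sketch is self-contained.
function pad(v: number, m: number): number {
  return Math.ceil(v / m) * m;
}

// Hypothetical helper: sizes involved when a texture is read back
// through a staging buffer whose rows are padded to 256 bytes.
function stagingLayout(width: number, height: number, bytesPerTexel: number) {
  const tightBytesPerRow = bytesPerTexel * width;
  const paddedBytesPerRow = pad(tightBytesPerRow, 256);
  return {
    tightBytesPerRow,
    paddedBytesPerRow,
    stagingBufferSize: paddedBytesPerRow * height, // bytes allocated
    payloadSize: tightBytesPerRow * height,        // bytes actually needed
  };
}

console.log(stagingLayout(5, 5, 4));
// { tightBytesPerRow: 20, paddedBytesPerRow: 256,
//   stagingBufferSize: 1280, payloadSize: 100 }

console.log(stagingLayout(64, 64, 4).paddedBytesPerRow); // 256, no padding needed
```

For the 5x5 case the staging buffer holds 1280 bytes, of which only 100 carry texel data.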

Returning a buffer with padding results in wasted memory and complicates the use of assertions like deepEqual().

To work around this problem, it is necessary to transfer the data from the staging buffer to a buffer of the correct size.
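The idea can be shown on a tiny buffer before looking at the full implementation. The stripRowPadding function below is a hypothetical, condensed stand-in without input validation, and the rows are padded to 8 bytes instead of 256 only to keep the data readable.

```typescript
// Hypothetical, condensed illustration of removing row padding.
function stripRowPadding(
  padded: Uint8Array,
  paddedBytesPerRow: number,
  tightBytesPerRow: number
): Uint8Array {
  const height = padded.byteLength / paddedBytesPerRow;
  const tight = new Uint8Array(height * tightBytesPerRow);
  for (let row = 0; row < height; row++) {
    const start = row * paddedBytesPerRow;
    // Keep only the first tightBytesPerRow bytes of each padded row
    tight.set(
      padded.subarray(start, start + tightBytesPerRow),
      row * tightBytesPerRow
    );
  }
  return tight;
}

// Two rows of 3 meaningful bytes, each padded with zeros to 8 bytes
const paddedRows = new Uint8Array([
  1, 2, 3, 0, 0, 0, 0, 0,
  4, 5, 6, 0, 0, 0, 0, 0,
]);

console.log(Array.from(stripRowPadding(paddedRows, 8, 3)));
// [ 1, 2, 3, 4, 5, 6 ]
```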

The sliceMatrix function reorganizes the data of a matrix, removing the padding present in the rows.

    export function sliceMatrix(
      matrix: ArrayBuffer,
      bytesPerRow: number,
      targetBytesPerRow: number
    ): ArrayBuffer {
      // Sanity checks
      if (matrix.byteLength === 0) {
        throw new Error('Input matrix is empty');
      }

      if (bytesPerRow <= 0 || targetBytesPerRow <= 0) {
        throw new Error('Bytes per row must be positive');
      }

      // Ensure matrix size is evenly divisible by bytes per row
      if (matrix.byteLength % bytesPerRow !== 0) {
        throw new Error(
          `Matrix size (${matrix.byteLength} bytes) is not evenly divisible by bytes per row (${bytesPerRow} bytes)`
        );
      }

      // Ensure target bytes per row is not larger than source bytes per row
      if (targetBytesPerRow > bytesPerRow) {
        throw new Error(
          `Target bytes per row (${targetBytesPerRow}) cannot be larger than source bytes per row (${bytesPerRow})`
        );
      }

      // Calculate height based on total buffer size and current bytes per row
      const height = matrix.byteLength / bytesPerRow;

      // Create a new buffer with the target bytes per row
      const slicedMatrix = new ArrayBuffer(height * targetBytesPerRow);

      // Create views for the source and destination buffers
      const sourceView = new Uint8Array(matrix);
      const destinationView = new Uint8Array(slicedMatrix);

      // Iterate through rows and copy the relevant portion
      for (let row = 0; row < height; row++) {
        // Source row start and slice
        const sourceRowStart = row * bytesPerRow;
        const sourceRowSlice = sourceView.subarray(
          sourceRowStart,
          sourceRowStart + targetBytesPerRow
        );

        // Destination row start
        const destRowStart = row * targetBytesPerRow;

        // Copy the slice to the destination
        destinationView.set(sourceRowSlice, destRowStart);
      }

      return slicedMatrix;
    }

The final version of readTextureData() uses sliceMatrix() if necessary, ensuring that the returned data has the correct format.

    async function readTextureData(
      device: GPUDevice,
      texture: GPUTexture
    ): Promise<TypedArray> {
      let stagingBuffer: GPUBuffer | undefined = undefined;
      try {
        const formatInfo = TEXTURE_FORMAT_INFO[texture.format]!;
        const bytesPerTexel = formatInfo.bytesPerTexel;
        const bytesPerRow = pad(bytesPerTexel * texture.width, 256);

        // 1) Create a staging buffer, configured to be mappable on the CPU
        stagingBuffer = device.createBuffer({
          label: `stagingBuffer(${texture.format})`,
          size: bytesPerRow * texture.height,
          usage: GPUBufferUsage.MAP_READ | GPUBufferUsage.COPY_DST
        });

        // 2) Copy the texture contents to the staging buffer using the copyTextureToBuffer() method
        const encoder: GPUCommandEncoder = device.createCommandEncoder(
          { label: `readTextureData(${texture.format})` }
        );
        encoder.copyTextureToBuffer(
          { texture },
          { buffer: stagingBuffer, bytesPerRow },
          { width: texture.width, height: texture.height, depthOrArrayLayers: 1 }
        );
        const commandBuffer = encoder.finish();
        device.queue.submit([commandBuffer]);

        // 3) Map the staging buffer into RAM
        await stagingBuffer.mapAsync(GPUMapMode.READ);
        const mappedRange: ArrayBuffer = stagingBuffer.getMappedRange();

        // 4) Create a copy of the necessary data, removing padding if necessary
        if (bytesPerRow !== bytesPerTexel * texture.width) {
          return new formatInfo.typedArrayConstructor(
            sliceMatrix(
              mappedRange,
              bytesPerRow,
              bytesPerTexel * texture.width
            )
          );
        }

        return new formatInfo.typedArrayConstructor(mappedRange.slice(0));
      } finally {
        if (stagingBuffer) {
          // 5) Unmap the buffer
          stagingBuffer.unmap();

          // 6) Destroy the staging buffer to release GPU memory
          stagingBuffer.destroy();
        }
      }
    }

#Gaussian blur tests

With the utilities just described, we have all the tools needed to test the Gaussian blur.

The test suite in test/browser/filter/gauss_blur_2d.qunit.ts uses a series of 5x5 patterns (single point, line, checkerboard, gradient) to verify both the basic and optimized implementations:

    module("Gaussian Blur 2D", hooks => {
      let adapter: GPUAdapter;
      let device: GPUDevice;

      hooks.before(async (assert) => {
        // Request the GPU adapter
        adapter = (await navigator.gpu.requestAdapter())!;
        assert.ok(adapter, "Able to request GPU adapter");

        // Request the GPU device
        device = await adapter.requestDevice({
          requiredFeatures: [
            "timestamp-query",
            "texture-compression-bc",
            "float32-filterable"
          ]
        });
        assert.ok(device, "Able to request GPU device");
      });

      test("point spread", async (assert) => {
        const inputData = new Uint8Array([
          0, 0,   0, 0, 0,
          0, 0,   0, 0, 0,
          0, 0, 255, 0, 0,
          0, 0,   0, 0, 0,
          0, 0,   0, 0, 0,
        ]);

        const expectedData = new Uint8Array([
          0, 0,   0, 0, 0,
          0, 0,   2, 0, 0,
          0, 2, 244, 2, 0,
          0, 0,   2, 0, 0,
          0, 0,   0, 0, 0,
        ]);

        const expectedData2 = new Uint8Array([
          0,  0,  1,  0, 0,
          0,  9, 29,  9, 0,
          1, 29, 91, 29, 1,
          0,  9, 29,  9, 0,
          0,  0,  1,  0, 0,
        ]);

        await basicBlur(assert, "r8uint", inputData, expectedData, 1, 1);
        await basicBlur(assert, "r8uint", inputData, expectedData2, 2, 1);
      });

      test("point spread float", async (assert) => {
        const inputData = new Float32Array([
          0, 0, 0, 0, 0,
          0, 0, 0, 0, 0,
          0, 0, 1, 0, 0,
          0, 0, 0, 0, 0,
          0, 0, 0, 0, 0,
        ]);

        const v0 = 0.00011810348951257765;
        const v1 = 0.010631335899233818;
        const v2 = 0.9570022225379944;
        const expectedData = new Float32Array([
          0,  0,  0,  0, 0,
          0, v0, v1, v0, 0,
          0, v1, v2, v1, 0,
          0, v0, v1, v0, 0,
          0,  0,  0,  0, 0
        ]);

        await basicBlur(assert, "r32float", inputData, expectedData, 1, 1e-7);
      });

      test("horizontal edge", async (assert) => {
        const inputData = new Uint8Array([
            0,   0,   0,   0,   0,
            0,   0,   0,   0,   0,
          255, 255, 255, 255, 255,
            0,   0,   0,   0,   0,
            0,   0,   0,   0,   0,
        ]);

        const expectedData = new Uint8Array([
            0,   0,   0,   0,   0,
            2,   2,   2,   2,   2,
          246, 249, 249, 249, 246,
            2,   2,   2,   2,   2,
            0,   0,   0,   0,   0,
        ]);

        await basicBlur(assert, "r8uint", inputData, expectedData, 1, 1);
      });

      test("checkerboard pattern", async (assert) => {
        const inputData = new Uint8Array([
          255,   0, 255,   0, 255,
            0, 255,   0, 255,   0,
          255,   0, 255,   0, 255,
            0, 255,   0, 255,   0,
          255,   0, 255,   0, 255,
        ]);

        const expectedData = new Uint8Array([
          244,   8, 244,   8, 244,
            8, 244,  10, 244,   8,
          244,  10, 244,  10, 244,
            8, 244,  10, 244,   8,
          244,   8, 244,   8, 244,
        ]);

        await basicBlur(assert, "r8uint", inputData, expectedData, 1, 1);
      });

      test("gradient", async (assert) => {
        const inputData = new Uint8Array([
          0, 50, 100, 150, 200,
          0, 50, 100, 150, 200,
          0, 50, 100, 150, 200,
          0, 50, 100, 150, 200,
          0, 50, 100, 150, 200
        ]);

        const expectedData = new Uint8Array([
          0, 49,  98, 148, 195,
          0, 50, 100, 150, 197,
          0, 50, 100, 150, 197,
          0, 50, 100, 150, 197,
          0, 49,  98, 148, 195
        ]);

        await basicBlur(assert, "r8uint", inputData, expectedData, 1, 1);
      });

      async function basicBlur(
        assert: Assert,
        format: GPUTextureFormat,
        inputData: TypedArray,
        expectedData: TypedArray,
        kernelRadius: number,
        tolerance: number
      ) {
        // ...
      }
    });

The expected data for the tests was generated with a Python script based on SciPy, contained in test/resources/gauss_blur.py.

The basicBlur() function handles the entire test process: first it creates an input texture using the test pattern, then applies the Gaussian filter with both implementations, and finally compares each result with the expected values, verifying that the difference falls within the established tolerance limits:

    async function basicBlur(
      assert: Assert,
      format: GPUTextureFormat,
      inputData: TypedArray,
      expectedData: TypedArray,
      kernelRadius: number,
      tolerance: number
    ) {
      let inputTexture: GPUTexture | undefined;
      let blurredTexture: GPUTexture | undefined;
      let optimizedBlurredTexture: GPUTexture | undefined;
      try {
        inputTexture = generateTexture(
          device,
          format,
          5,
          5,
          inputData,
          GPUTextureUsage.TEXTURE_BINDING,
          "inputTexture"
        );

        blurredTexture = await gauss2dBlur(
          device,
          inputTexture,
          kernelRadius
        );

        optimizedBlurredTexture = await gauss2dBlurOptimized(
          device,
          inputTexture,
          kernelRadius
        );

        const blurredTextureData = await readTextureData(
          device,
          blurredTexture
        );

        const optimizedBlurredTextureData = await readTextureData(
          device,
          optimizedBlurredTexture
        );

        assert.textureMatches(
          blurredTextureData,
          expectedData,
          5,
          5,
          1,
          tolerance,
          `gauss2dBlur works (k = ${kernelRadius})`
        );

        assert.textureMatches(
          optimizedBlurredTextureData,
          expectedData,
          5,
          5,
          1,
          tolerance,
          `gauss2dBlurOptimized works (k = ${kernelRadius})`
        );
      } finally {
        inputTexture?.destroy();
        blurredTexture?.destroy();
        optimizedBlurredTexture?.destroy();
      }
    }

This approach allows effectively testing the correctness of the implementations, avoiding code duplication between different test cases.
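Both implementations can be checked against the same expected data because a Gaussian kernel is separable: one pass with the full 2D kernel gives the same result as a horizontal 1D pass followed by a vertical one. The CPU sketch below illustrates this property and is not the shader code from the previous articles. It assumes sigma = radius / 3 and zero padding outside the image, neither of which is stated in this article. With these assumptions the centre value comes out at about 0.957, in line with the v2 constant of the float test.

```typescript
// Normalized 1D Gaussian kernel with 2 * radius + 1 weights.
function gaussianKernel1d(radius: number, sigma: number): number[] {
  const weights: number[] = [];
  for (let i = -radius; i <= radius; i++) {
    weights.push(Math.exp(-(i * i) / (2 * sigma * sigma)));
  }
  const sum = weights.reduce((a, b) => a + b, 0);
  return weights.map((w) => w / sum);
}

// Reads a pixel, returning 0 outside the image (zero padding, an assumption).
function pixelAt(img: number[], w: number, h: number, x: number, y: number): number {
  return x < 0 || y < 0 || x >= w || y >= h ? 0 : img[y * w + x];
}

// Single pass with the full 2D kernel (outer product of the 1D kernel).
function blurFull2d(img: number[], w: number, h: number, k: number[]): number[] {
  const r = (k.length - 1) / 2;
  const out = new Array<number>(w * h).fill(0);
  for (let y = 0; y < h; y++) {
    for (let x = 0; x < w; x++) {
      let acc = 0;
      for (let dy = -r; dy <= r; dy++) {
        for (let dx = -r; dx <= r; dx++) {
          acc += k[dy + r] * k[dx + r] * pixelAt(img, w, h, x + dx, y + dy);
        }
      }
      out[y * w + x] = acc;
    }
  }
  return out;
}

// One 1D pass, along x when horizontal is true, otherwise along y.
function blurPass1d(
  img: number[], w: number, h: number, k: number[], horizontal: boolean
): number[] {
  const r = (k.length - 1) / 2;
  const out = new Array<number>(w * h).fill(0);
  for (let y = 0; y < h; y++) {
    for (let x = 0; x < w; x++) {
      let acc = 0;
      for (let d = -r; d <= r; d++) {
        acc += k[d + r] * (horizontal
          ? pixelAt(img, w, h, x + d, y)
          : pixelAt(img, w, h, x, y + d));
      }
      out[y * w + x] = acc;
    }
  }
  return out;
}

// Two-pass version: horizontal pass followed by vertical pass.
function blurTwoPass(img: number[], w: number, h: number, k: number[]): number[] {
  return blurPass1d(blurPass1d(img, w, h, k, true), w, h, k, false);
}

// 5x5 image with a single lit pixel in the centre, as in "point spread float"
const point = new Array<number>(25).fill(0);
point[12] = 1;

const kernel = gaussianKernel1d(1, 1 / 3);
const full = blurFull2d(point, 5, 5, kernel);
const twoPass = blurTwoPass(point, 5, 5, kernel);
const maxDiff = Math.max(...full.map((v, i) => Math.abs(v - twoPass[i])));

console.log(full[12].toFixed(6)); // 0.957002
console.log(maxDiff < 1e-12);     // true
```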

The comparison with texture data is performed using the textureMatches() method, which is a custom extension of the assert object. Compared to the built-in deepEqual() assertion, textureMatches() handles comparison with a margin of error, which is useful when working with floating-point numerical data. Additionally, this method improves error messages in case of mismatch, formatting the matrices in a more readable way with the formatMatrix() function:

    import { TypedArray } from "@/webgpu/texture_metadata.ts";
    import { formatMatrix } from "@/utils/math.ts";

    QUnit.assert.textureMatches = function(
      actual: TypedArray,
      expected: TypedArray,
      width: number,
      height: number,
      channelCount: number = 1,
      tolerance: number = 0,
      message?: string
    ) {
      // Verify the result length matches the expected size
      const expectedSize = width * height * channelCount;
      const lengthMatches = actual.length === expectedSize;
      let mismatchIndex = -1;
      if (lengthMatches) {
        // Find the first value that differs by more than the tolerance
        mismatchIndex = actual.findIndex(
          (value, index) => Math.abs(value - expected[index]) > tolerance
        );
      }

      const textureMatches = lengthMatches && mismatchIndex === -1;

      // Small textures are printed in full, large ones only at the mismatch
      let actualMsg: string, expectedMsg: string;
      if (width * height <= 100) {
        actualMsg = formatMatrix(actual, channelCount, width, height, '\t');
        expectedMsg = formatMatrix(expected, channelCount, width, height, '\t');
      } else if (mismatchIndex !== -1) {
        actualMsg = `length: ${actual.length}, actual[${mismatchIndex}] = ${actual[mismatchIndex]}`;
        expectedMsg = `length: ${expected.length}, expected[${mismatchIndex}] = ${expected[mismatchIndex]}`;
      } else {
        actualMsg = `length: ${actual.length}`;
        expectedMsg = `length: ${expected.length}`;
      }

      this.pushResult({
        result: textureMatches,
        actual: actualMsg,
        expected: expectedMsg,
        message: message || `Texture data matches`
      });
    }

For typing, it is sufficient to extend the Assert interface in a .d.ts file:

    /// <reference types="vite/client" />
    /// <reference types="qunit" />
    import type { TypedArray } from "@/webgpu/texture_metadata.ts";

    declare global {
      interface Assert {
        textureMatches(
          actual: TypedArray,
          expected: TypedArray,
          width: number,
          height: number,
          channelCount: number,
          tolerance: number,
          message?: string
        ): void;
      }
    }

    export {};

It is important to include the file that defines the assertion in the main entry point, along with the imports of other tests, as shown in the following example:

    import { test, module } from "qunit";
    import "@test/browser/filter/gauss_blur_2d.qunit.ts";
    import "@test/browser/webgpu/read_texture.qunit.ts";
    import "@test/browser/utils/texture_assert.ts";
    import '@test/browser/qunit.css';

    module("WebGPU", hooks => {
      test("is available", async (assert) => {
        assert.ok(navigator.gpu, "WebGPU is supported");

        const adapter = await navigator.gpu.requestAdapter();
        assert.ok(adapter, "Able to request GPU adapter");

        assert.ok(true, `Vendor: ${adapter!.info.vendor}`);
        assert.ok(true, `Device: ${adapter!.info.device}`);
        assert.ok(true, `Description: ${adapter!.info.description}`);
        assert.ok(true, `Architecture: ${adapter!.info.architecture}`);
      });
    });

This way, the file with the Assert interface extension is correctly included and made available for use in the test functions.
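The formatMatrix() helper is imported from @/utils/math.ts but is not listed in this article. A minimal sketch of what such a helper might look like, with the same argument order as the call in textureMatches(), is given below. The name formatMatrixSketch and the exact output format are assumptions.

```typescript
// Hypothetical stand-in for formatMatrix(); the real helper may format differently.
function formatMatrixSketch(
  data: ArrayLike<number>,
  channelCount: number,
  width: number,
  height: number,
  separator: string
): string {
  const rows: string[] = [];
  for (let y = 0; y < height; y++) {
    const texels: string[] = [];
    for (let x = 0; x < width; x++) {
      const base = (y * width + x) * channelCount;
      const channels: string[] = [];
      for (let c = 0; c < channelCount; c++) {
        channels.push(String(data[base + c]));
      }
      // Multi-channel texels are grouped in parentheses, e.g. (r,g,b,a)
      texels.push(channelCount === 1 ? channels[0] : `(${channels.join(",")})`);
    }
    rows.push(texels.join(separator));
  }
  return rows.join("\n");
}

const sample = new Uint8Array([
  0,   2, 0,
  2, 244, 2,
  0,   2, 0,
]);

console.log(formatMatrixSketch(sample, 1, 3, 3, " "));
// 0 2 0
// 2 244 2
// 0 2 0
```

Printing one row per line makes it much easier to spot which texel differs than the flat array dump produced by a plain deepEqual() failure.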

#Conclusion

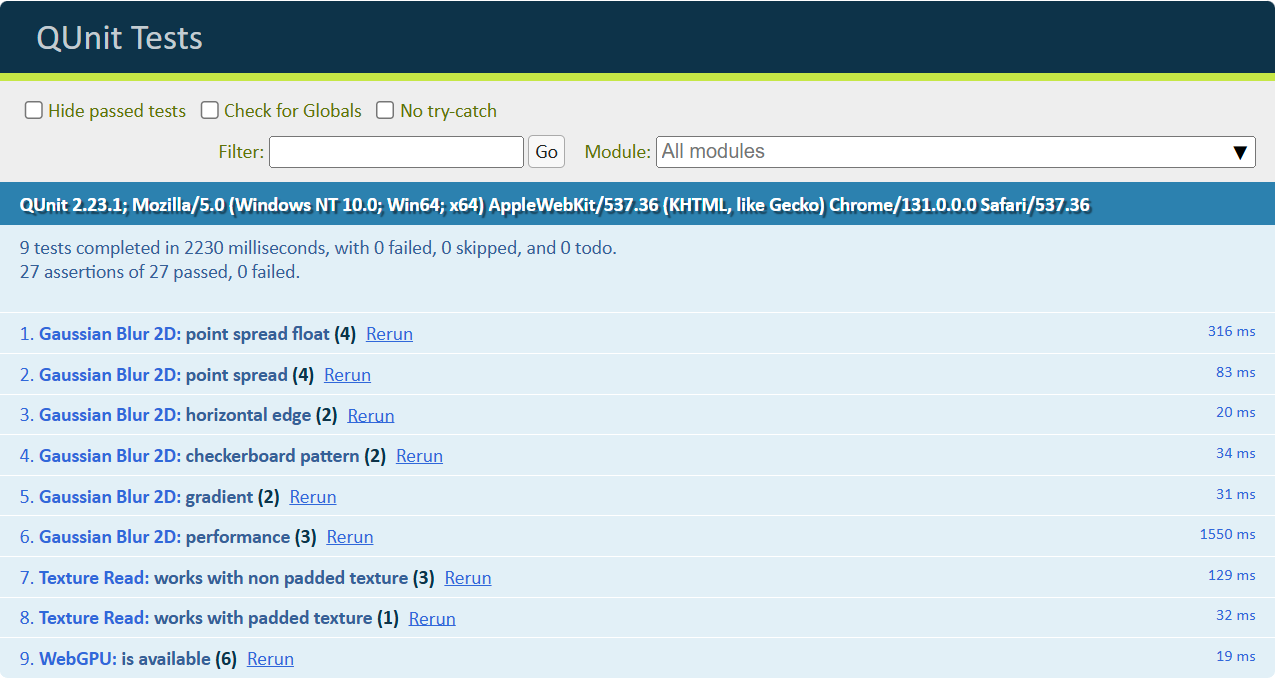

In this article, we saw how to test WebGPU code in the browser using the QUnit framework.

The complete tests are available in the GitHub project.

The utility functions discussed are particularly useful when you want to test WebGPU-based code that makes use of textures.